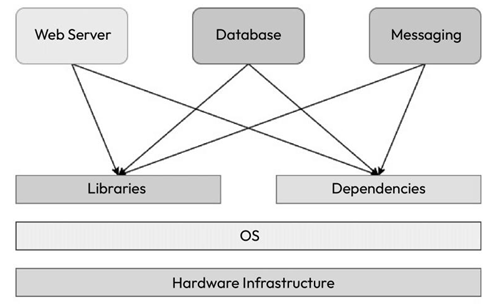

Let’s say you’re preparing a server that will run multiple applications for multiple teams. Now, assume that you don’t have a virtualized infrastructure and that you need to run everything on one physical machine, as shown in the following diagram:

Figure 1.3 – Applications on a physical server

One application uses one particular version of a dependency, while another application uses a different one, and you end up managing two versions of the same software on one system. As you scale the system to host more applications, you will be managing hundreds of dependencies in various versions, each catering to a different application. Over time, this becomes unmanageable within one physical system. This scenario is known as the matrix of hell in popular computing nomenclature.
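To make the problem concrete, here is a minimal sketch of how version conflicts pile up on a shared host. The application names, dependency names, and version numbers are all hypothetical and chosen only for illustration; the function simply reports every dependency that is required in more than one version.

```python
from collections import defaultdict

# Hypothetical applications and the dependency versions each one pins.
APP_REQUIREMENTS = {
    "billing": {"openssl": "1.1", "python": "3.8"},
    "reports": {"openssl": "3.0", "python": "3.8"},
    "search": {"openssl": "1.1", "python": "3.11"},
}


def find_version_conflicts(app_requirements):
    """Return {dependency: {version: [apps]}} for every dependency
    that is required in more than one version on the same host."""
    versions_by_dependency = defaultdict(lambda: defaultdict(list))
    for app, requirements in app_requirements.items():
        for dependency, version in requirements.items():
            versions_by_dependency[dependency][version].append(app)
    return {
        dependency: {version: sorted(apps) for version, apps in versions.items()}
        for dependency, versions in versions_by_dependency.items()
        if len(versions) > 1  # more than one version means a conflict
    }


conflicts = find_version_conflicts(APP_REQUIREMENTS)
for dependency, versions in sorted(conflicts.items()):
    print(dependency, versions)
```

Even with three applications and two dependencies, both dependencies already have to be installed in two versions side by side. Every new application or library adds another row or column to this matrix.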

There are multiple ways out of the matrix of hell, but two technological contributions are notable: virtual machines and containers.

Virtual machines

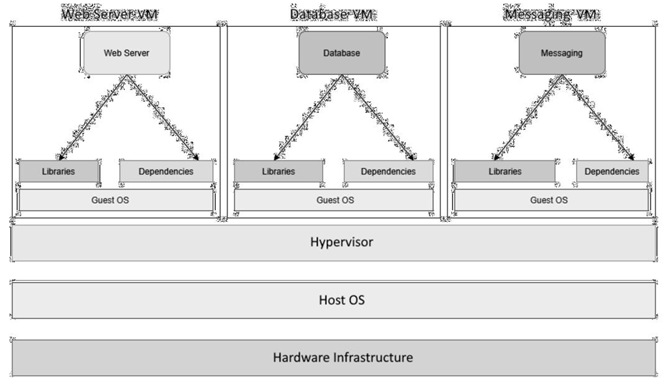

A virtual machine emulates a complete computer, including its own OS, using a technology called a hypervisor. A hypervisor can run as software on a physical host OS or run directly on a bare-metal machine. Virtual machines run as virtual guest OSes on the hypervisor. With this technology, you can subdivide a sizeable physical machine into multiple smaller virtual machines, each catering to a particular application. This approach has shaped computing infrastructure for almost two decades and is still in use today. Some of the most popular hypervisors on the market are VMware's products and Oracle VirtualBox.

The following diagram shows the same stack on virtual machines. You can see that each application now runs on a dedicated guest OS, each of which has its own libraries and dependencies:

Figure 1.4 – Applications on virtual machines

Though this approach is acceptable, it is like using an entire ship for your goods when a single shipping container would do. Virtual machines are heavy on resources because each one needs a full guest OS layer to isolate applications rather than something more lightweight. You need to allocate dedicated CPU and memory to each virtual machine, and resource sharing is suboptimal since people tend to overprovision virtual machines to cater to peak load. Virtual machines are also slower to start, and scaling them is traditionally more cumbersome because multiple moving parts and technologies are involved. Therefore, automating horizontal scaling (handling more traffic from users by adding more machines to the resource pool) with virtual machines is not very straightforward. Also, sysadmins now have to manage multiple servers instead of numerous libraries and dependencies on a single one. This is better than before, but it is not optimal from a compute resource point of view.
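The overprovisioning cost can be sketched with some simple arithmetic. The virtual machine names and vCPU figures below are made up for illustration, and the model assumes that allocated vCPUs are dedicated to each virtual machine and cannot be lent to others.

```python
from dataclasses import dataclass


@dataclass
class VirtualMachine:
    name: str
    allocated_vcpus: int       # dedicated to the VM, sized for peak load
    average_vcpus_used: float  # typical demand outside of peaks


# Hypothetical fleet: every VM is sized for its own peak.
VMS = [
    VirtualMachine("billing", allocated_vcpus=8, average_vcpus_used=2.0),
    VirtualMachine("reports", allocated_vcpus=4, average_vcpus_used=1.0),
    VirtualMachine("search", allocated_vcpus=8, average_vcpus_used=3.0),
]


def idle_fraction(vms):
    """Fraction of allocated vCPUs that sit unused on average."""
    allocated = sum(vm.allocated_vcpus for vm in vms)
    used = sum(vm.average_vcpus_used for vm in vms)
    return (allocated - used) / allocated


print(f"{idle_fraction(VMS):.0%} of allocated vCPUs sit idle on average")
```

With these invented numbers, 14 of the 20 allocated vCPUs are idle most of the time, because each virtual machine holds on to its own peak capacity even when another one could use it.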